Tools to Detect Security Risks Across the Entire SDLC: A Deep Dive

TL;DR

Security teams face a fundamental challenge today: AI-accelerated development moves faster than manual security processes can handle. Engineering teams ship code daily, sometimes hourly, while security reviews remain stuck in quarterly cycles or ad-hoc requests. The gap between what gets built and what gets reviewed keeps widening.

This article breaks down the tools and approaches available for detecting security risks across the entire software development lifecycle (SDLC). We'll cover what works, what doesn't, and where the industry is heading. If you're a security architect, AppSec engineer, or security leader trying to scale security coverage, this is for you.

Why SDLC Security Coverage Remains a Hard Problem

Design Flaws

The traditional approach to application security focused on testing code after it was written. Static Application Security Testing (SAST) scans source code. Dynamic Application Security Testing (DAST) probes running applications. Software Composition Analysis (SCA) checks dependencies. These tools matter, but they all share a limitation: they find problems after the architecture decisions are already locked in.

Consider a feature that stores sensitive health data in a new microservice. If the team chose the wrong encryption approach, picked an insecure communication protocol, or failed to consider data residency requirements, no amount of code scanning will fix those architectural flaws cheaply. The cost of fixing security issues rises steeply as you move from design to development to production. A threat identified during design review might take an hour to address. The same threat found in production could require weeks of rework.

The Velocity Problem

Modern engineering teams operate on continuous delivery models. Even without agentic coding, a mid-sized company with 500 developers might still push hundreds of changes per week across dozens of services. Each change carries potential security implications. The math doesn't work for manual review — only high-visibility features get reviewed. Security teams triage constantly, hoping they catch the most dangerous changes while smaller modifications slip through unexamined.

The AI Code Generation Acceleration

Today, tools like GitHub Copilot and Cursor have changed the equation again. Developers now generate code faster than ever. A function that took 30 minutes to write now takes 5 minutes with AI assistance. But AI coding assistants don't inherently understand your organization's security requirements, compliance obligations, or architectural standards. They produce functional code that may or may not align with your security posture.

This creates a new attack surface: AI-generated code that works correctly but introduces vulnerabilities because the AI wasn't context-aware. Your payment processing service needs PCI-DSS compliance. Your healthcare application needs HIPAA safeguards. The AI assistant doesn't know that unless you tell it, and developers often don't think to specify security requirements in their prompts.

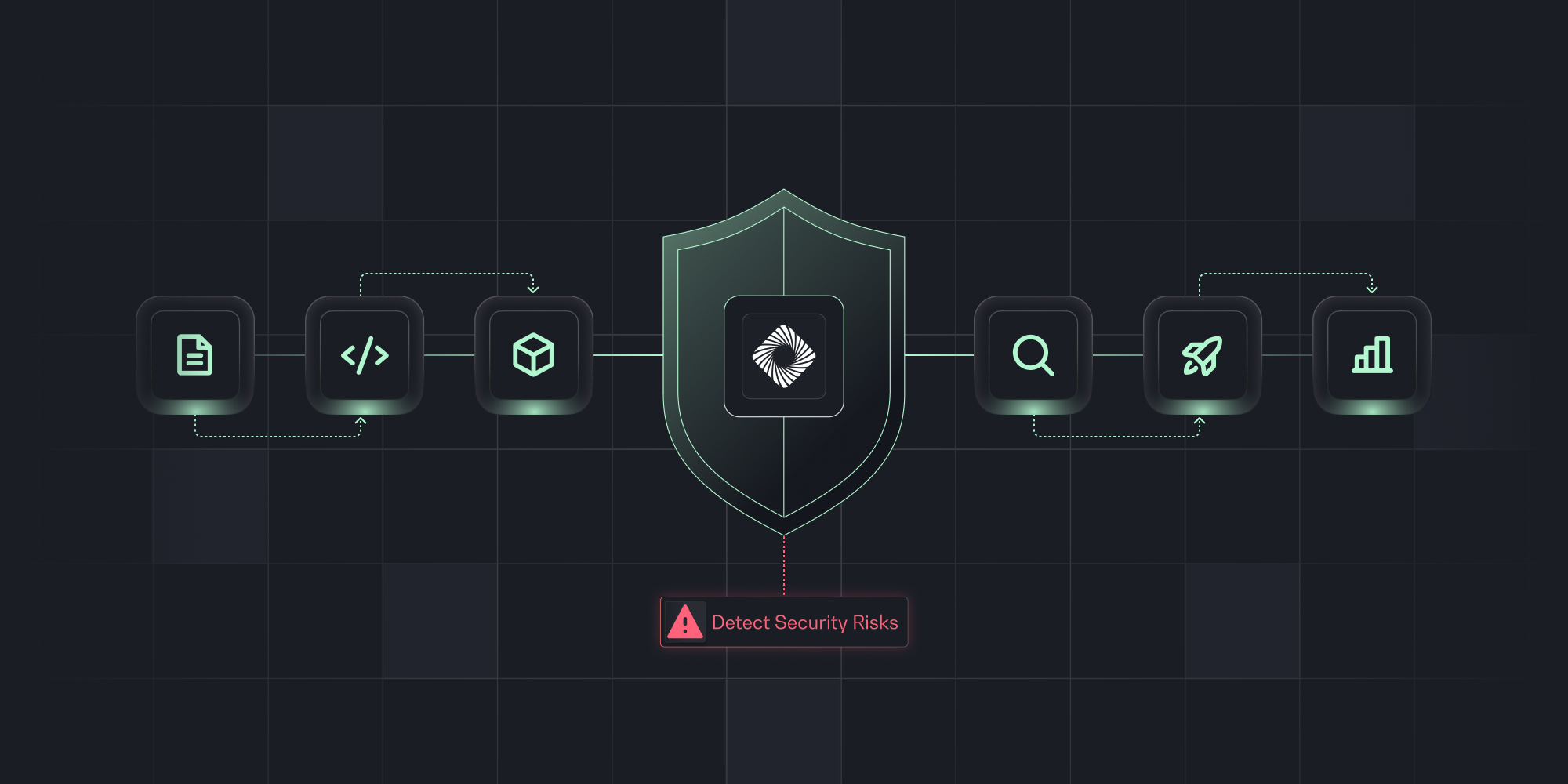

Mapping Security Tools to SDLC Phases

Design-Stage Security: Where Most Organizations Fail

Design-stage security review catches architectural flaws before they become expensive problems. Threat modeling, security architecture review, and risk analysis all happen here. Yet this phase receives the least tooling investment in most organizations.

The Manual Threat Modeling Bottleneck

Traditional threat modeling follows a process like STRIDE (Spoofing, Tampering, Repudiation, Information Disclosure, Denial of Service, Elevation of Privilege) or PASTA (Process for Attack Simulation and Threat Analysis). A security architect reviews system diagrams, identifies assets and trust boundaries, brainstorms potential threats, and documents mitigations.
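To make the brainstorming step concrete, here is a minimal sketch of the widely used "STRIDE-per-element" simplification, which narrows the six categories by diagram element type. The element names, the `enumerate_threats` helper, and the example components are illustrative assumptions, not part of any specific tool.

```python
from enum import Enum


class Stride(Enum):
    SPOOFING = "Spoofing"
    TAMPERING = "Tampering"
    REPUDIATION = "Repudiation"
    INFORMATION_DISCLOSURE = "Information Disclosure"
    DENIAL_OF_SERVICE = "Denial of Service"
    ELEVATION_OF_PRIVILEGE = "Elevation of Privilege"


# Simplified STRIDE-per-element chart: categories usually considered
# for each data flow diagram element type.
APPLICABLE = {
    "external_entity": {Stride.SPOOFING, Stride.REPUDIATION},
    "process": set(Stride),
    # Repudiation mainly applies to data stores that hold logs.
    "data_store": {
        Stride.TAMPERING,
        Stride.REPUDIATION,
        Stride.INFORMATION_DISCLOSURE,
        Stride.DENIAL_OF_SERVICE,
    },
    "data_flow": {
        Stride.TAMPERING,
        Stride.INFORMATION_DISCLOSURE,
        Stride.DENIAL_OF_SERVICE,
    },
}


def enumerate_threats(elements):
    """Return (element name, category) pairs for the architect to review."""
    pairs = []
    for name, element_type in elements:
        for category in Stride:  # iterate the enum for a stable order
            if category in APPLICABLE[element_type]:
                pairs.append((name, category.value))
    return pairs


# Hypothetical diagram with two elements.
diagram = [("patient-db", "data_store"), ("intake-api", "process")]
for name, category in enumerate_threats(diagram):
    print(f"{name}: {category}")
```

The output is only a checklist of questions to ask. Deciding which threats are credible and how to mitigate them is still the manual part described above.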

This works well for large, well-defined projects with clear timelines. It fails for agile development where requirements evolve weekly and features ship in two-week sprints. By the time a manual threat model is complete, the architecture may have already changed.

Gen 1 Threat Modeling Tools: Diagram-Centric Approaches

First-generation threat modeling platforms like ThreatModeler, IriusRisk, and SD Elements attempted to speed up manual processes. They provide structured workflows for creating data flow diagrams, identifying threats based on component types, and generating security requirements.

These tools improved consistency and documentation. A threat model created in IriusRisk follows a predictable format and covers standard threat categories. But they still require significant manual effort:

- Someone must create or update the system diagrams

- Security architects must interpret results and prioritize findings

- Integration with development workflows remains limited

- No automatic scanning of planned work in Jira or other ALM tools

IriusRisk describes itself as an automated threat modeling platform that helps identify and mitigate security risks early in the SDLC based on system architecture diagrams and questionnaires. The key phrase is "based on diagrams and questionnaires." If nobody creates the diagram or fills out the questionnaire, no analysis happens.

AI-Native Design Review: The Next Generation

A newer category of tools uses AI to automate design-stage security analysis. Instead of waiting for manual diagram creation, these platforms scan development planning tools (Jira, Confluence, Azure DevOps, Linear) to identify security-relevant work automatically.

The approach works like this:

- Continuous scanning: The platform monitors your ALM tools for new epics, stories, and design documents

- Context discovery: AI analyzes PRDs, ERDs, architecture docs, and related artifacts to understand what's being built

- Risk identification: The system identifies potential security risks based on the planned changes

- Automated analysis: AI generates threat analysis, data flow diagrams, and mitigation recommendations

- Workflow integration: Findings appear in developer tools, not separate security portals

This shifts the model from "security must initiate reviews" to "reviews happen automatically for all planned work." Coverage expands from 10-15% to nearly 100% of development activity.
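The scanning and risk identification steps can be sketched with a deliberately simple stand-in: keyword rules in place of AI analysis. Everything here is an assumption for illustration, including the `Ticket` shape, the signal categories, and the patterns. A real platform would pull tickets through the ALM tool's API and use far richer context.

```python
import re
from dataclasses import dataclass

# Hypothetical signal categories and patterns; a rule-based stand-in
# for the AI analysis described above.
SECURITY_SIGNALS = {
    "authentication": r"\b(login|oauth|sso|password|session|token)\b",
    "sensitive data": r"\b(pii|phi|health|payment|credit card|ssn)\b",
    "external integration": r"\b(webhook|third[- ]party|external api|vendor)\b",
}


@dataclass
class Ticket:
    key: str
    summary: str
    description: str = ""


def triage(ticket):
    """Return the sorted signal categories that a ticket's text matches."""
    text = f"{ticket.summary} {ticket.description}".lower()
    return sorted(
        label
        for label, pattern in SECURITY_SIGNALS.items()
        if re.search(pattern, text)
    )


def needs_review(tickets):
    """Map ticket key -> matched categories, skipping tickets with no match."""
    flagged = {}
    for ticket in tickets:
        labels = triage(ticket)
        if labels:
            flagged[ticket.key] = labels
    return flagged


backlog = [
    Ticket("APP-1", "Add SSO login for admin portal"),
    Ticket("APP-2", "Fix button alignment on settings page"),
]
print(needs_review(backlog))
```

Even this crude filter shows the shift in model: every ticket is examined, and only those with security-relevant signals are routed for deeper analysis.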

What separates mature AI-native platforms from earlier entrants in this category is what happens across reviews over time. The most capable platforms maintain an AI memory layer — accumulating past reviews, approved architectural patterns, policy resolutions, and design decisions — and apply that institutional knowledge automatically to new work. A risk that was assessed and mitigated six months ago informs how a similar design is evaluated today. That compounding organizational context is what distinguishes a platform that gets smarter over time from one that simply automates a static checklist.

Static Analysis Tools: Finding Bugs in Source Code

Static Application Security Testing (SAST) analyzes source code without executing it. These tools look for patterns that indicate vulnerabilities: SQL injection, cross-site scripting, buffer overflows, insecure cryptography, and hundreds of other issue types.
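As a rough illustration of pattern-based static analysis, the sketch below uses Python's standard `ast` module to flag `execute(...)` calls whose query is built with an f-string or an operator such as `+` or `%`. This is a toy rule under simplifying assumptions (any method named `execute` is treated as a database call), not how any particular commercial scanner works.

```python
import ast


class SqlInjectionVisitor(ast.NodeVisitor):
    """Collect line numbers of execute() calls with dynamically built queries."""

    def __init__(self):
        self.findings = []

    def visit_Call(self, node):
        is_execute = (
            isinstance(node.func, ast.Attribute) and node.func.attr == "execute"
        )
        if is_execute and node.args:
            query = node.args[0]
            # f-strings parse as JoinedStr; "+" and "%" formatting parse as BinOp.
            if isinstance(query, (ast.JoinedStr, ast.BinOp)):
                self.findings.append(node.lineno)
        self.generic_visit(node)


def scan(source):
    """Parse source code without running it and return suspicious line numbers."""
    visitor = SqlInjectionVisitor()
    visitor.visit(ast.parse(source))
    return visitor.findings


SAMPLE = '''
def get_user(cursor, user_id):
    cursor.execute(f"SELECT * FROM users WHERE id = {user_id}")
    cursor.execute("SELECT * FROM users WHERE id = %s", (user_id,))
'''

print(scan(SAMPLE))  # the f-string query is flagged; the parameterized one is not
```

The rule never executes the code, which is what makes it static. It also shows where false positives come from: an f-string built only from trusted constants would be flagged just the same.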

Leading SAST Platforms:

- Checkmarx: Enterprise SAST, 25+ programming languages, integrates with major CI/CD systems

- SonarQube: Code quality and security analysis; open-source Community Edition plus commercial tiers

- Semgrep: Lightweight, pattern-based scanning with YAML rule format; fast enough for pre-commit hooks

SAST Limitations:

- False positive rates: 30-70% depending on tool, language, and codebase

- Misses: business logic flaws, configuration issues, complex auth/authz gaps, second-order vulnerabilities

The Structural Blind Spot: Context SAST Can't See

Beyond false positives and missed vulnerability classes, SAST has a more fundamental constraint: it's repository-scoped. A scanner analyzes a specific codebase in isolation. It doesn't know what the feature was supposed to do, how data flows across service boundaries, what architectural decisions were made upstream, or how one finding connects to another across the system.

This means SAST identifies code-level symptoms but can't reason about attack chains. A SQL injection finding is flagged; whether that injection sits inside a service that was also designed with overly permissive IAM and an undocumented external API integration — a materially different risk profile — is invisible to the scanner. Closing that gap requires context that lives outside the repository, in the planning layer where design decisions were originally made.
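A minimal sketch of that idea: take a code-level finding and adjust its severity using design context recorded outside the repository. The `ServiceContext` fields, the 1-to-5 severity scale, and the one-step escalation per factor are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ServiceContext:
    """Design-layer facts about a service (hypothetical fields)."""
    name: str
    permissive_iam: bool = False
    undocumented_integrations: list = field(default_factory=list)


@dataclass
class Finding:
    service: str
    rule: str
    severity: int  # 1 (low) to 5 (critical); the scale is an assumption


def contextual_severity(finding, contexts):
    """Return (adjusted severity, reasons) for a finding given design context."""
    context = contexts.get(finding.service)
    if context is None:
        return finding.severity, []
    reasons = []
    if context.permissive_iam:
        reasons.append("overly permissive IAM")
    if context.undocumented_integrations:
        reasons.append("undocumented external integration")
    # Escalate one step per aggravating factor, capped at the top of the scale.
    return min(5, finding.severity + len(reasons)), reasons


contexts = {"billing": ServiceContext("billing", True, ["partner-api"])}
print(contextual_severity(Finding("billing", "sql-injection", 3), contexts))
print(contextual_severity(Finding("search", "sql-injection", 3), contexts))
```

The same rule ID produces two different outcomes here. That difference is exactly the information a repository-scoped scanner does not have.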

The Rest of the Build-Phase Toolchain

SAST addresses code-level vulnerabilities, but a complete build-phase security program requires four additional categories running in parallel.

Software Composition Analysis (SCA) addresses the reality that modern applications are often estimated to consist of 80-90% third-party code. SCA tools compare dependency manifests against vulnerability databases, flagging known-vulnerable packages with CVE identifiers and remediation paths. Advanced capabilities include reachability analysis (is the vulnerable code path actually executed?), license compliance checking, and malicious package detection for supply chain attacks. Key tools: Snyk, Dependabot (GitHub-native), OWASP Dependency-Check.
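The core comparison an SCA tool performs can be sketched in a few lines. The advisory data below is entirely made up (`examplelib` and `EXAMPLE-2024-0001` are placeholders), and the version parser assumes plain dotted numeric versions. Real tools query maintained vulnerability databases and handle full version-range semantics.

```python
def parse_version(text):
    """Turn '2.10.0' into (2, 10, 0) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


# Hypothetical advisories: package -> [(advisory id, first fixed version)].
ADVISORIES = {
    "examplelib": [("EXAMPLE-2024-0001", "2.4.1")],
}


def audit(manifest):
    """manifest: dict of package name -> pinned version string."""
    results = []
    for package, version in sorted(manifest.items()):
        for advisory_id, fixed_in in ADVISORIES.get(package, []):
            if parse_version(version) < parse_version(fixed_in):
                results.append((package, version, advisory_id, fixed_in))
    return results


print(audit({"examplelib": "2.3.9", "otherlib": "1.0.0"}))
print(audit({"examplelib": "2.10.0"}))  # numerically newer than 2.4.1
```

Comparing parsed tuples rather than raw strings matters: as strings, "2.10.0" sorts before "2.4.1" and would be reported as vulnerable by mistake.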

Secrets Detection catches hardcoded credentials before they reach version control, or scans history to find what already slipped through. Tools like Gitleaks and TruffleHog use pattern matching against known secret formats, entropy analysis for random-looking strings, and full Git history traversal. Pre-commit hooks using tools like the pre-commit framework prevent secrets from entering repositories at all, which is significantly cheaper than rotating compromised credentials after the fact.
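The two detection techniques named above, format matching and entropy analysis, can be sketched as follows. The AWS access key ID prefix pattern is well known. The 20-character minimum and the 4.0 entropy threshold are assumptions picked for the example, and real scanners ship many more rules with tuned thresholds.

```python
import math
import re
from collections import Counter

# Known format: AWS access key IDs start with "AKIA" plus 16 uppercase/digit chars.
AWS_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")
# Candidate tokens for the entropy check (length cutoff is an assumption).
CANDIDATE = re.compile(r"[A-Za-z0-9+/=_\-]{20,}")


def shannon_entropy(text):
    """Bits of entropy per character, based on character frequencies."""
    counts = Counter(text)
    return -sum(
        (n / len(text)) * math.log2(n / len(text)) for n in counts.values()
    )


def scan_line(line, threshold=4.0):
    """Return the names of the rules that a single line triggers."""
    hits = []
    if AWS_KEY_ID.search(line):
        hits.append("aws-access-key-id")
    for token in CANDIDATE.findall(line):
        if shannon_entropy(token) >= threshold:
            hits.append("high-entropy-string")
            break
    return hits


# AKIAIOSFODNN7EXAMPLE is the placeholder key ID used in AWS documentation.
print(scan_line('aws_key = "AKIAIOSFODNN7EXAMPLE"'))
print(scan_line('greeting = "hello world"'))
```

Run as a pre-commit hook over staged lines, a check like this blocks the commit before the secret ever lands in history.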

Infrastructure as Code (IaC) Scanning addresses configuration vulnerabilities in cloud infrastructure defined as Terraform, CloudFormation, or Kubernetes manifests — risks that application scanners miss entirely. An S3 bucket with public access or a security group permitting unrestricted SSH represents serious exposure that no amount of code scanning will surface. Checkov covers 750+ policies across AWS, Azure, and GCP; Trivy handles both container and IaC scanning in a single tool.
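A single IaC policy, "no SSH open to the world," might look like the sketch below. It assumes the ingress rules have already been parsed into dictionaries whose keys mirror the `from_port`, `to_port`, and `cidr_blocks` attributes of a Terraform security group. Parsing HCL itself, and IPv6 ranges, are out of scope here.

```python
def check_security_group(ingress_rules):
    """Flag ingress rules that expose port 22 to any IPv4 address."""
    findings = []
    for index, rule in enumerate(ingress_rules):
        open_to_world = "0.0.0.0/0" in rule.get("cidr_blocks", [])
        covers_ssh = rule["from_port"] <= 22 <= rule["to_port"]
        if open_to_world and covers_ssh:
            findings.append(f"ingress[{index}]: SSH (22) open to 0.0.0.0/0")
    return findings


# Hypothetical, pre-parsed ingress rules.
rules = [
    {"from_port": 443, "to_port": 443, "cidr_blocks": ["0.0.0.0/0"]},
    {"from_port": 22, "to_port": 22, "cidr_blocks": ["0.0.0.0/0"]},
    {"from_port": 22, "to_port": 22, "cidr_blocks": ["10.0.0.0/8"]},
]
print(check_security_group(rules))
```

Checking the port as a range rather than an exact match catches rules such as ports 0 to 65535 that include SSH without naming it.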

Dynamic Application Security Testing (DAST) probes running applications from the outside, simulating attacker behavior without source code access. Where SAST finds patterns in code, DAST finds vulnerabilities that only manifest at runtime: authentication bypasses, session management flaws, and injection points that static analysis missed. ZAP (formerly OWASP ZAP) provides open-source coverage; Burp Suite dominates the commercial market for both automated scanning and manual penetration testing.
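The black-box principle can be shown without a network: send a marker payload to a handler and inspect only the response. The two handlers below are hypothetical stand-ins for a deployed endpoint, and the check covers just one vulnerability class (reflected, unescaped input).

```python
import html

# A distinctive payload that should never appear verbatim in a safe response.
MARKER = "<script>probe-7f3a</script>"


def vulnerable_search(query):
    """Hypothetical endpoint that echoes input straight into HTML."""
    return f"<p>Results for {query}</p>"


def safe_search(query):
    """Hypothetical endpoint that escapes input before rendering."""
    return f"<p>Results for {html.escape(query)}</p>"


def reflects_unescaped(handler):
    """Probe a handler from the outside: is the payload returned verbatim?"""
    return MARKER in handler(MARKER)


print(reflects_unescaped(vulnerable_search))
print(reflects_unescaped(safe_search))
```

The probe never looks at how the handler is written, only at what it returns. That is why DAST needs a running target and can only test the paths it actually exercises.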

Runtime Security and ASPM

Runtime Security monitors applications during execution to detect attacks in progress. RASP (Runtime Application Self-Protection) instruments applications from inside the running process, seeing actual execution context and blocking malicious requests without affecting legitimate traffic. For containerized environments, Falco monitors system calls from containers and fires alerts on suspicious behavior — a shell spawned in a container, sensitive files accessed unexpectedly, outbound connections to unusual ports. Aqua Security provides comprehensive container security across the full lifecycle.
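Conceptually, runtime detection is rule evaluation over a stream of events. The sketch below expresses two of the behaviors mentioned above as Python predicates. The event fields (`type`, `process`, `path`, `container_id`) are invented for the example, and this is not Falco's rule syntax or event schema.

```python
SHELLS = {"sh", "bash", "zsh"}
SENSITIVE_PATHS = ("/etc/shadow", "/root/.ssh/")


def evaluate(event):
    """Return alert names for one event (hypothetical event schema)."""
    alerts = []
    in_container = event.get("container_id") is not None
    if (
        in_container
        and event.get("type") == "execve"
        and event.get("process") in SHELLS
    ):
        alerts.append("shell spawned in container")
    if (
        in_container
        and event.get("type") == "open"
        and event.get("path", "").startswith(SENSITIVE_PATHS)
    ):
        alerts.append("sensitive file opened in container")
    return alerts


events = [
    {"type": "execve", "process": "bash", "container_id": "abc123"},
    {"type": "execve", "process": "bash", "container_id": None},
    {"type": "open", "path": "/etc/shadow", "container_id": "abc123"},
]
for event in events:
    print(evaluate(event))
```

The second event shows why context matters at runtime too: a shell on the host is routine, while the same process inside a container is worth an alert.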

Application Security Posture Management (ASPM) sits above all other tools in the stack, aggregating findings from SAST, DAST, SCA, IaC, and secret scanners into a unified risk view. The value is correlation and prioritization: a vulnerability that appears across multiple tool outputs can be deduplicated, ranked by actual business impact, and routed to the right engineering team. Without an aggregation layer, security teams drown in disconnected findings across disconnected dashboards. Notable platforms: Legit Security, Apiiro, ArmorCode, DefectDojo (open source).
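The correlation and prioritization step can be approximated as: merge findings that describe the same weakness on the same asset, then rank by severity weighted by how much the asset matters. The finding shape, the asset criticality weights, and the scoring formula are assumptions for illustration only.

```python
from collections import defaultdict


def aggregate(findings, criticality):
    """Deduplicate findings by (asset, weakness) and rank by weighted severity.

    findings: dicts with "tool", "asset", "weakness", "severity" keys.
    criticality: asset name -> business weight (defaults to 1).
    """
    merged = defaultdict(lambda: {"tools": set(), "severity": 0})
    for finding in findings:
        key = (finding["asset"], finding["weakness"])
        merged[key]["tools"].add(finding["tool"])
        merged[key]["severity"] = max(merged[key]["severity"], finding["severity"])
    ranked = [
        {
            "asset": asset,
            "weakness": weakness,
            "tools": sorted(entry["tools"]),
            "score": entry["severity"] * criticality.get(asset, 1),
        }
        for (asset, weakness), entry in merged.items()
    ]
    return sorted(ranked, key=lambda item: item["score"], reverse=True)


findings = [
    {"tool": "sast", "asset": "payments", "weakness": "CWE-89", "severity": 4},
    {"tool": "dast", "asset": "payments", "weakness": "CWE-89", "severity": 5},
    {"tool": "sca", "asset": "blog", "weakness": "CWE-79", "severity": 5},
]
for item in aggregate(findings, {"payments": 3, "blog": 1}):
    print(item)
```

Three raw findings become two work items, and the one confirmed by two tools on the more critical asset rises to the top.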

Where Prime Security Fits In

Most SDLC security tooling is built to catch problems. SAST finds vulnerable code. SCA flags risky dependencies. DAST probes running applications. These tools are valuable, but they share a structural constraint: they operate downstream from the decisions that matter most. By the time a scanner runs, the architecture is already set, the patterns are already baked in, and fixing what's wrong costs multiples of what it would have cost to get it right at the design stage.

Prime is built for a different intervention point — and in some cases, a different kind of intervention entirely.

Design: Securing Every Product Decision Before a Line of Code Is Written

At the design phase, Prime acts as an AI Security Architect embedded in the planning layer. Rather than waiting for a developer to submit a design doc or trigger a review request, Prime continuously monitors Jira, Confluence, and other ALM tools — scanning epics, stories, and linked documentation as they're written.

For every planned task, large or small, Prime analyzes the business and technical context: what's being built, what data it touches, what systems it integrates with, and what compliance obligations apply. It flags risks early and delivers actionable mitigations directly into the workflow, in Jira or in the AI coding tools developers are already using.

Critically, Prime distinguishes signal from noise. It surfaces what matters — authorization logic errors, weak session management, unapproved external entities, unrestricted network access, audit and compliance violations — without drowning developers in findings they can't prioritize or act on.

The result is design-stage coverage that scales. Instead of the 10–15% of planned work that typically gets reviewed through manual processes, Prime covers nearly 100% of development activity continuously. Security stops being a gate at the end of the design cycle and starts being a built-in property of how decisions get made.

Development: AI Coding Guardrails That Understand Your Organization

AI coding tools like Cursor and GitHub Copilot accelerate development. They also generate code without any awareness of your organization's security requirements, compliance posture, or architectural standards. A developer prompting Copilot for a new authentication flow gets code that's syntactically correct and functionally reasonable — and potentially misaligned with every internal security policy your team has spent years enforcing.

Prime addresses this through its MCP-based integration with VS Code-based IDEs, connecting the context it has accumulated about your organization's design decisions and security requirements directly into the AI code generation workflow. When a developer writes code with Cursor or Claude Code, Prime's guidance is already present, not as a post-generation review but as context injected at the point of generation. Secure patterns get reinforced. Organizational requirements get reflected in what the AI produces. Vulnerabilities that would otherwise be generated don't get written in the first place.

Build: Context-Aware Attack Chain Analysis That SAST Can't Provide

Traditional SAST tools are repository-scoped. They analyze a specific codebase, apply rules to identify vulnerability patterns, and produce a list of findings. This is useful. It's also inherently limited: a SAST scanner doesn't know what the feature was supposed to do, how data flows across service boundaries, what design decisions were made upstream, or how one finding connects to another across the system.

Prime operates with the full context of the planned work — the original Jira tickets, the architecture decisions, the PRDs, the linked design documents, the past reviews from similar features. When vulnerability findings surface at the build phase, Prime can map them against that context to identify attack chains that a code-scoped scanner would never surface. A standalone SQL injection finding is one thing. A SQL injection in a service that was also designed with overly permissive IAM and an undocumented external API integration is a different risk profile entirely. Prime can reason across that full picture — not just flag the code-level symptom.

Building a Complete SDLC Security Toolchain

Recommended stack for midsize organizations (200-1000 developers):

Design Phase:

- Design review platform with Jira/Confluence integration (Prime Security for AI-native review; IriusRisk or ThreatModeler for diagram-based threat modeling)

Development Phase:

- IDE security plugins (Prime Security, SonarLint, Snyk IDE)

- Pre-commit hooks for secrets detection

- AI code generation guardrails

Build Phase:

- SAST in CI (Checkmarx, Prime Security, SonarQube, or Semgrep)

- SCA (Snyk, Dependabot)

- Secrets scanning (Gitleaks, TruffleHog)

- IaC scanning (Checkov, Trivy)

Test Phase:

- DAST (ZAP, Burp Suite)

- Container image scanning (Trivy, Aqua)

Production Phase:

- Runtime monitoring (Falco for Kubernetes)

- RASP for high-risk applications

Aggregation Layer:

- ASPM platform for unified visibility

Integration patterns that work:

- Fail fast, fail informatively

- Baseline and suppress existing issues; enforce on new findings

- Right-size scanning (incremental for PRs, comprehensive for main)

- Push results to developer-facing tools, not just security dashboards
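The "baseline and suppress" pattern from the list above can be sketched as a small CI gate: fingerprint each finding, compare against a stored baseline, and fail only when something new appears. The finding fields and the fingerprint recipe are assumptions. Many scanners offer their own baseline mechanisms, which should be preferred where available.

```python
import hashlib


def fingerprint(finding):
    """Stable ID for a finding; line numbers are left out on purpose."""
    raw = f'{finding["rule"]}|{finding["file"]}|{finding["snippet"].strip()}'
    return hashlib.sha256(raw.encode()).hexdigest()


def new_findings(current, baseline_fingerprints):
    """Keep only findings whose fingerprint is not in the baseline."""
    return [f for f in current if fingerprint(f) not in baseline_fingerprints]


def gate(current, baseline_fingerprints):
    """Return a CI exit code: 0 when nothing new was introduced, 1 otherwise."""
    fresh = new_findings(current, baseline_fingerprints)
    for finding in fresh:
        print(f'NEW {finding["rule"]} in {finding["file"]}: {finding["snippet"].strip()}')
    return 1 if fresh else 0


# Hypothetical findings from two scanner runs.
old = {"rule": "sql-injection", "file": "legacy/report.py", "snippet": "cur.execute(q + uid)"}
new = {"rule": "hardcoded-secret", "file": "api/client.py", "snippet": 'KEY = "abc"'}
baseline = {fingerprint(old)}

print(gate([old], baseline))
print(gate([old, new], baseline))
```

Leaving line numbers out of the fingerprint means an unrelated edit that shifts code up or down does not make an old finding look new. Printing each new finding with its rule and location is the "fail informatively" half of the first pattern.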

The DIY Trap: Why a Generic LLM Alone Won't Solve SDLC Security

Generic LLM limitations for security analysis:

- Hallucination: confident but incorrect findings

- No continuous scanning: requires manual prompting per review

- No institutional memory: each conversation starts fresh

- No validation loop: no tracking that mitigations were implemented

- No aggregation: can't query overall risk posture across products

Building these capabilities on top of generic LLMs requires substantial engineering investment that often exceeds purpose-built tool pricing. The five gaps above — continuous scanning, institutional memory, validation, aggregation, and reliable accuracy — aren't incidental limitations. They're the core capabilities that purpose-built security platforms are designed to provide. Organizations that attempt to close them with prompt engineering and custom tooling typically find the maintenance burden grows faster than the security value delivered.

Measuring SDLC Security Program Effectiveness

Coverage Metrics:

- % of repositories with SAST scanning enabled

- % of planned development work receiving design review

- % of container images scanned before deployment

- % of dependencies monitored for vulnerabilities

Efficiency Metrics:

- Mean time from finding to remediation (MTTR)

- False positive rate by tool

- Security review cycle time

- Developer time spent on security fixes

Risk Metrics:

- Critical/high vulnerabilities in production

- Security debt trend

- Vulnerabilities caught in design vs. development vs. production

- Compliance control coverage
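Most of these metrics reduce to simple arithmetic once the underlying data is collected. The sketch below computes a coverage percentage, mean time to remediation, and the share of vulnerabilities caught in each phase. The function names and all sample figures are invented for illustration.

```python
from datetime import datetime


def coverage_pct(covered, total):
    """Percentage of items (repos, images, tickets) that are covered."""
    return round(100 * covered / total, 1) if total else 0.0


def mttr_days(findings):
    """Mean days from detection to fix; unresolved findings (None) are skipped."""
    durations = [
        (fixed - found).total_seconds() / 86400
        for found, fixed in findings
        if fixed is not None
    ]
    return sum(durations) / len(durations) if durations else None


def phase_share(counts):
    """Percentage of vulnerabilities first caught in each SDLC phase."""
    total = sum(counts.values())
    if not total:
        return {}
    return {phase: round(100 * n / total, 1) for phase, n in counts.items()}


# Sample figures (made up).
print(coverage_pct(45, 60))
print(mttr_days([
    (datetime(2024, 1, 1), datetime(2024, 1, 4)),
    (datetime(2024, 1, 1), datetime(2024, 1, 2)),
    (datetime(2024, 1, 5), None),
]))
print(phase_share({"design": 6, "development": 3, "production": 1}))
```

Tracking `phase_share` over time is a direct way to see whether detection is moving earlier in the lifecycle.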

Future Directions

The capabilities shaping the next generation of SDLC security aren't predictions. The tools delivering them exist today:

- AI-native platforms: reason about architecture, threat models, and business risk — not just code patterns

- Design-stage automation: continuous scanning of Jira, Confluence, and design docs surfaces risks before code is written, not after it's reviewed

- AI code generation security: guardrails that inject organizational security context directly into Copilot and Cursor workflows at the point of generation

- Continuous posture management: real-time risk visibility across all planned development work, not periodic point-in-time assessments

- Developer-first experiences: security that meets developers in their existing tools — IDE, Jira, pull request — with less noise and clearer remediation

Organizations that treat these as future aspirations are already behind teams using them in production today.

Frequently Asked Questions

What is the best tool to detect security risks across the entire SDLC?

No single tool covers all SDLC phases effectively. Organizations need a combination: design review platforms for the planning phase, SAST and SCA for the development and build phases, DAST for testing, IaC scanning for infrastructure, and runtime monitoring for production. AI-native platforms like Prime Security that integrate with development planning tools (Jira, Confluence) provide the broadest coverage — spanning design-stage review, AI coding guardrails at the development phase, and context-aware attack chain analysis at build — while ASPM platforms aggregate findings from point tools into a unified view.

How do SAST and DAST tools differ in detecting security vulnerabilities?

SAST (Static Application Security Testing) analyzes source code without executing it, identifying vulnerabilities through pattern matching and data flow analysis. It finds issues early but produces more false positives, misses runtime-specific vulnerabilities, and — critically — operates without the design-layer context needed to reason about attack chains across service boundaries. DAST (Dynamic Application Security Testing) probes running applications by sending attack payloads and analyzing responses. It finds vulnerabilities that only manifest at runtime but requires a deployed application and may miss issues in code paths that aren't exercised during testing. Effective security programs use both approaches, and complement them with design-stage review to catch architectural risks before either scanner runs.

Which SDLC phase is most neglected for security tooling?

The design and requirements phase receives the least security tooling investment. Most organizations focus on code scanning (SAST, SCA) during build phases but perform minimal design-stage security review. Industry data suggests only 10-15% of planned development work receives security design review. This gap is significant because architectural security flaws are exponentially more expensive to fix once code is written. AI-native design review tools address this gap today by continuously scanning development planning tools for security-relevant work — without waiting for a developer to initiate a review.

What are the main categories of DevSecOps tools for SDLC security?

The main DevSecOps tool categories include: Design Review and Threat Modeling tools (IriusRisk, ThreatModeler, Prime Security), IDE security plugins and AI coding guardrails (Prime Security, SonarLint, Snyk IDE), Static Application Security Testing (Checkmarx, SonarQube, Semgrep, Prime Security), Software Composition Analysis (Snyk, Dependabot, OWASP Dependency-Check), Dynamic Application Security Testing (ZAP, Burp Suite), Secrets Detection (Gitleaks, TruffleHog), Infrastructure as Code Scanning (Checkov, Trivy, tfsec), Container Security (Aqua Security, Trivy), Runtime Protection (Falco, Contrast Security), and Application Security Posture Management (Legit Security, Apiiro).

How can organizations scale security reviews to match development velocity?

Organizations scale security reviews through automation and prioritization. AI-native design review tools can analyze PRDs and architecture documents automatically, completing reviews in minutes instead of hours. Continuous scanning of ALM tools (Jira, Azure DevOps) identifies security-relevant work without manual triage. AI coding guardrails, delivered via IDE integrations like Prime Security's MCP-based VS Code integration, inject organizational security requirements directly into AI code generation workflows, preventing vulnerabilities at the point of creation rather than catching them downstream. Risk-based prioritization focuses human review time on high-impact changes, and workflow integration (IDE plugins, pull request comments, Jira tasks) provides immediate feedback without requiring developers to context-switch to separate security portals.

What security risks does AI-generated code introduce to the SDLC?

AI coding assistants like GitHub Copilot and Cursor generate functional code that may not align with organizational security requirements. They don't inherently know about PCI-DSS, HIPAA, or company-specific security policies. This creates risks including: generated code with vulnerable patterns, missing input validation, hardcoded credentials suggested in examples, insecure default configurations, and failure to implement required security controls. Organizations address this through AI code generation guardrails that inject organizational security context directly into the AI workflow at the point of generation — ensuring generated code reflects the security requirements developers might not think to specify in their prompts.

Why isn't a generic LLM sufficient for SDLC security analysis?

Generic LLMs lack critical capabilities for production security analysis: they hallucinate security issues (both false positives and dangerous false negatives), don't continuously scan development work, have no institutional memory of your architecture or past decisions, provide no validation that mitigations were implemented, and can't aggregate risk posture across products. Building these capabilities requires substantial engineering investment for guardrails, domain fine-tuning, integration plumbing, and ongoing maintenance that typically exceeds the cost of purpose-built security tools — and still doesn't produce the organizational context that makes reviews meaningful.

What metrics should security teams track for SDLC security programs?

Effective SDLC security programs track coverage metrics (percentage of repositories scanned, percentage of work receiving design review), efficiency metrics (mean time to remediation, false positive rates, review cycle time), and risk metrics (critical vulnerabilities in production, security debt trend, vulnerabilities caught by phase). The ratio of vulnerabilities found in design vs. development vs. production indicates program maturity. A smaller share of vulnerabilities first found in production suggests earlier detection, which reduces remediation costs.

How do first-generation threat modeling tools compare to AI-native design review platforms?

First-generation tools like ThreatModeler, IriusRisk, and SD Elements are diagram-based and require significant manual effort to create system models and interpret results. They improved documentation consistency but couldn't match modern development velocity. AI-native platforms automate the entire process: they scan ALM tools to discover planned work, analyze design documents automatically, generate data flow diagrams from artifacts, and deliver findings directly to developer workflows. The most capable also maintain AI memory across reviews — accumulating organizational context, past decisions, and approved patterns that compound over time. This expands coverage from 10-15% of work reviewed to nearly 100%, without proportional headcount increases.

What integration points are essential for SDLC security tools?

Essential integration points include: source code repositories (GitHub, GitLab, Bitbucket) for code scanning triggers, CI/CD pipelines (Jenkins, GitHub Actions, Azure DevOps) for automated scanning during builds, issue tracking systems (Jira, Azure DevOps, Linear) for finding routing and remediation tracking, development planning tools (Jira, Confluence) for design-stage risk discovery, IDE platforms (VS Code, IntelliJ) for real-time developer feedback, and communication tools (Slack, Teams) for alerting and collaboration. Deep integration with Jira and Confluence is particularly valuable for design-stage security automation — enabling proactive scanning of planned work rather than reactive review of completed features.

Summary Reference Table: Tools by SDLC Phase

[Image: summary reference table of tools by SDLC phase]